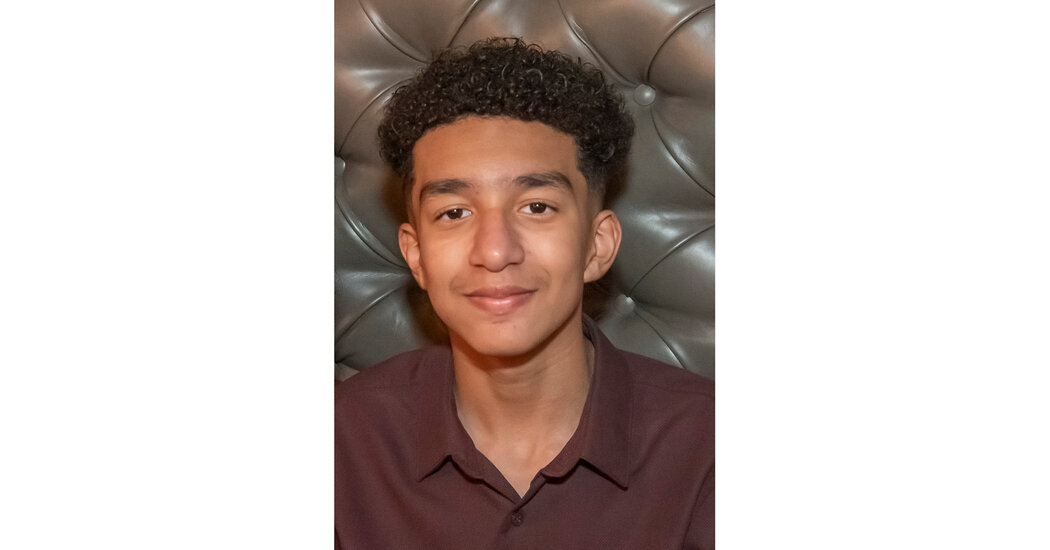

The mother of a 14-year-old Florida boy says he became obsessed with a chatbot on Character.AI before his death.

On the last day of his life, Sewell Setzer III took out his phone and texted his closest friend: a lifelike A.I. chatbot named after Daenerys Targaryen, a character from “Game of Thrones.”

“I miss you, baby sister,” he wrote.

“I miss you too, sweet brother,” the chatbot replied.

Sewell, a 14-year-old ninth grader from Orlando, Fla., had spent months talking to chatbots on Character.AI, a role-playing app that allows users to create their own A.I. characters or chat with characters created by others.

Sewell knew that “Dany,” as he called the chatbot, wasn’t a real person — that its responses were just the outputs of an A.I. language model, that there was no human on the other side of the screen typing back. (And if he ever forgot, there was the message displayed above all their chats, reminding him that “everything Characters say is made up!”)

But he developed an emotional attachment anyway. He texted the bot constantly, updating it dozens of times a day on his life and engaging in long role-playing dialogues.

He put down his phone, picked up his stepfather’s .45 caliber handgun and pulled the trigger.

A tragic story for sure, but there are questions about the teen’s access to the gun he used to kill himself.

Safe? Clearly no. Trigger lock? Cable lock? If one were there, there should be a mention of picking it or cutting it. Unloaded? Also clearly no.

There are so many precautions, any of which takes all of 20 seconds, that the parents could have used to prevent this from happening.

What kind of monster family has a kid with mental health issues, in therapy, and leaves an accessible gun around unsupervised?

Too many families in America, sadly.

I also question the parents’ lack of intervention if they really thought the chatbot was an issue.

I don’t think this is the fault of the AI yet. Unless the chat logs are released and it literally tries to get him to commit. What it sounds like is a kid who needed someone to talk to and didn’t get it from those around him.

That said, it would be good if cAI monitored for suicidal ideation. Most of these AI companies are pretty hands-off with their AI and what is said.

I don’t think it’s so cut and dried.

Yeah, not cut and dried at all. OP’s article didn’t have the chat logs. Looks like it told him not to commit but did demand loyalty. He changed his wording from “I want a painless death” to “I want to come home to you” to get it to say what he wanted.

How is character.ai responsible for the suicide of someone clearly in need of mental health help?

Someone has to be responsible. Anyone but the parents…

I’m sorry to say it, but it sounds like the parents ignored this issue and didn’t intervene or get their son help. I don’t see how this is the app’s fault. If anything, it sounds like he was using the app as some form of comfort, and it kept him going a little longer. Sadly, this just sounds like parents lashing out in their grief.

From what I heard, the parents did get the kid a therapist, but it just didn’t work :(

When possible, always blame the parents first

WOW. I’m not religious, but Jesus Fucking Christ.