Highlights: The White House issued draft rules today that would require federal agencies to evaluate and constantly monitor algorithms used in health care, law enforcement, and housing for potential discrimination or other harmful effects on human rights.

Once in effect, the rules could force changes in US government activity dependent on AI, such as the FBI’s use of face recognition technology, which has been criticized for not taking steps called for by Congress to protect civil liberties. The new rules would require government agencies to assess existing algorithms by August 2024 and stop using any that don’t comply.

I mean, that broadly seems like a good thing. Execution is important, but on paper this seems like the kind of forward-thinking policy we need.

Quite frankly it didn’t put enough restrictions on the various “national security” agencies, and so while it may help to stem the tide of irresponsible usage by many of the lesser-impact agencies, it doesn’t do the same for the agencies that we know will be the worst offenders (and have been the worst offenders).

“If the benefits do not meaningfully outweigh the risks, agencies should not use the AI,” the memo says. But the draft memo carves out an exemption for models that deal with national security and allows agencies to effectively issue themselves waivers if ending use of an AI model “would create an unacceptable impediment to critical agency operations.”

This tells me that nothing is going to change if people can just say that giving up their algorithms would make them too inefficient. Great sentiment, but this loophole will make it useless.

This seems to me like an exception that would realistically only apply to the CIA, NSA, and sometimes the FBI. I doubt the Department of Housing and Urban Development will get a pass. Overall seems like a good change in a good direction.

The CIA and NSA are exactly who we don’t want using it though.

Agreed, but it’s at least a step forward, setting a precedent for AI in government use. I would love a perfect world where all bills passed are “all or nothing” legislation, but realistically this is a good start, and then citizens should demand tighter oversight of national security agencies as the next issue to tackle.

“next issue to tackle”

It’s been the next issue to tackle since at least October 26th, 2001. They have no accountability. Adding these carve outs is just making it harder to get accountability.

Given the “success” of Israel’s high-tech border fence, it seems like bureaucracies think tech will work better than actually, you know, resolving/preventing geopolitical problems with diplomacy and intelligence.

I worry these kinds of tech solutions become a predictable crutch. Assuming there is some kind of real necessity to these spy programs (debatable), it seems like reliance on data tech can become a weakness as soon as those intending harm understand how it works.

Like either of those agencies will let us know what they are doing in the first place.

At a certain level, there are no rules when they never have to tell what they are doing.

Algorithms that gerrymander voting district boundaries might be an early battleground.

The early battleground of 2010 when they started using RedMap.

Folksy narrator: “Turns out, the U.S. government cannot operate without racism.”

Great sentiment but

It’s not a “great sentiment” - it’s essentially just more of the same liberal “let’s pretend we care by doing something completely ineffective” posturing and little else.

Watchmen watching over themselves, what could possibly go wrong, right?

Sent to my state representative. Thanks!

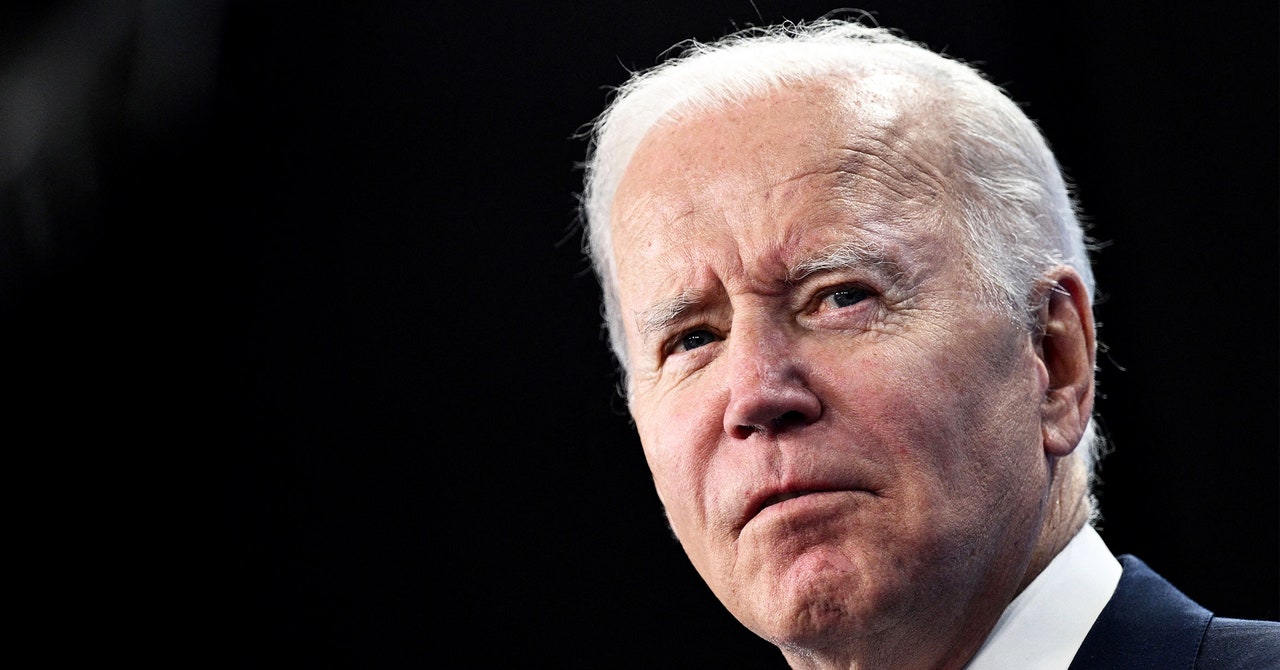

Interesting. I want algorithms to warn us about potential harms by Joe Biden. What if we were able to fund an AI run by the GAO that can tell us when government decisions make the majority of our lives worse?

It’s a long way off and might be a bad idea to trust an AI outright, but I just wish we had a more data-informed government.

You might be interested in data.gov. The Obama admin kicked off the Government Open Data Initiative to provide transparency in government. Agencies have been given a means to publish their data, which US taxes pay for. You’d be surprised what’s in there. It’s not an algorithm, but you could certainly build one from that if you wanted to.