Found it first here - https://mastodon.social/@BonehouseWasps/111692479718694120

Not sure if this is the right community on Lemmy to discuss this?

Not as bad as the AI-generated articles showing up in search results. Some websites I get driven to make absolutely no sense, despite a lot of words being written about all kinds of topics.

I’m looking forward to the day when “certified human content” is a thing, and that’s all search engines allow you to see.

> I’m looking forward to the day when “certified human content” is a thing, and that’s all search engines allow you to see.

I can’t wait for that. I get the feeling it’s gonna get real messy before we figure out solutions to all the problems caused by AI-generated content.

I mean yeah, there’s already plenty of human-generated misinformation and shit, but it seems to me (not an expert) like AI is capable of fucking with society on a whole new scale.

The big difference is that high quality human generated content is often based on reputation, a history of quality content, and frequently reviewed by experts in the field (very common for medical articles).

But AI has none of that. It’s 100% quantity over quality, and that’s just internet pollution as far as I’m concerned.

We really do have to figure something out, though.

https://mashable.com/article/world-of-warcraft-wow-reddit-ai-glorbo

Redditors already tricked a bot into writing an entire article when they noticed a website was clearly scraping /r/wow.

They’ll just make certification so expensive only the wealthy will qualify.

You’ll never hear another perspective again.

I mean, they would have started appearing in there from the first moment that someone created one and hosted it somewhere, no? So it’s already been a thing for a couple years now, I believe.

AI generation sites about to become Pinterest 2.0 for clogging up search results.

I hate so much how Pinterest occludes and pollutes Google Images 🙄

The AI centipede

Google is a search engine, it shows stuff hosted on the Internet. If these AI generated images are hosted on the Internet, Google should show them.

Except it VERY heavily weights certain sources.

Everything has been fake since the invention of photography. The degree varies, but images have never been used in mass media to document the truth in any way shape or form, and especially not on the click-driven Internet and doubly so on Google Images. Even if an image comes right from the camera, you still have heavy bias in the selection process of what images get shown to begin with and which remain hidden.

If you are looking for truth in photography, you are about 150 years too late.

Well, of course. The search algorithm has no way to know the difference.

Thank you for circling the largest photo, my eyes didn’t know where to go #bless 🙏

Not my original content, I just saw this post from Mastodon

I wonder what will happen in the future as AIs get trained on AI-generated images that they got from the internet. Would the generated images start to degrade, or would some kind of distinct style pop out?

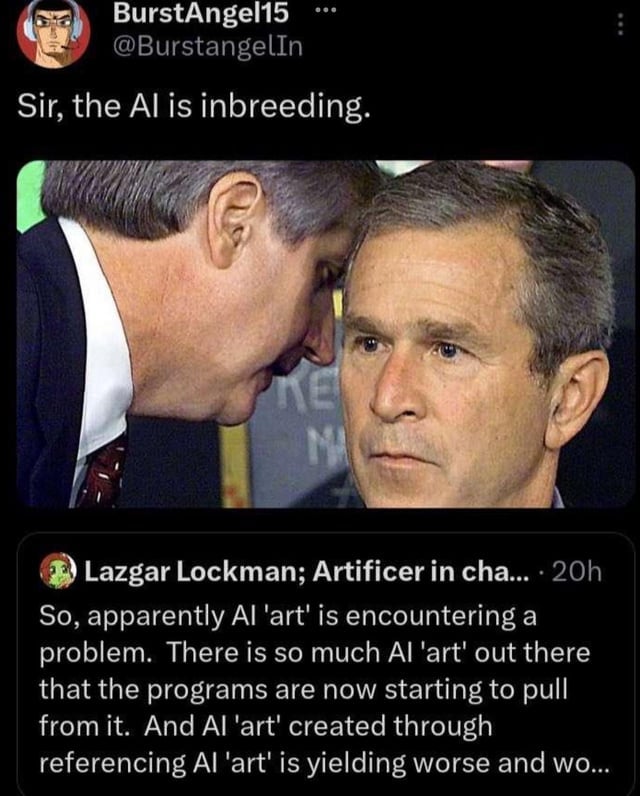

You mean like this?

Yeah, something like that. I imagine it would be something like a JPEG, which degrades as you keep converting it over and over. But I’m not sure what the AI-generated images would look like.

Not really. Check out Midjourney v6 generated images. I found many that look indistinguishable from real images, so I don’t see why image generation should get worse. What matters is the dataset, and only the dataset. It doesn’t matter if the model is trained on AI images, as long as the dataset is good.

Just wanted to point out that the Pinterest examples are conflating two distinct issues: low-quality results polluting our searches (in that they are visibly AI-generated) and images that are not “true” but very convincing.

The first one (search results quality) should theoretically be Google’s main job, except that they’ve never been great at it with images. Better quality results should get closer to the top as the algorithm and some manual editing do their job; crappy images (including bad AI ones) should move towards the bottom.

The latter issue (“reality” of the result) is the one I find more concerning. As AI-generated results get better and harder to tell from reality, how would we know that the search results for anything aren’t a convincing spoof just coughed up by an AI? But I’m not sure this is a search-engine or even an Internet-specific issue. The internet is clearly more efficient in spreading information quickly, but any video seen on TV or image quoted in a scientific article has to be viewed much more skeptically now.

Have been for a while. Pretty annoying and I wish you could filter them out.

The Google AI that pre-loads the results query isn’t able to distinguish real photos from fake AI generated photos. So there’s no way to filter out all the trash, because we’ve made generative AI just good enough to snooker search AI.

A lot of them mention they’re using an AI art generator in the description. Even only filtering out self-reported ones would be useful.

That still requires a uniform method of tagging art as such. Which is absolutely a thing that could be done, but there’s no upside to the effort. If your images all get tagged “AI” and another generator’s don’t, what benefit is that to you? That’s before we even get into what digital standard gets used in the tagging. Do we assign this to the image itself (making it more reliable but also more difficult to implement)? As structured metadata (making it easier to apply, but also easier to spoof or scrape off)? Or is Google just expected to parse this information from a kaleidoscope of generating and hosting standards?

Times like this, it would be helpful for - say - the FCC or ICANN to get involved. But that would be Big Government Overreach, so it ain’t going to happen.
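For what it’s worth, the “filter out the self-reported ones” idea from above is easy to sketch. This is only a rough illustration, not how Google or anyone else actually does it: the `ImageResult` type, the `ai_generated` metadata key, and the keyword list are all made up for the example, and anything that doesn’t label itself slips straight through.

```python
from dataclasses import dataclass, field

# Hypothetical keyword list; a real one would need constant upkeep.
AI_KEYWORDS = ("ai-generated", "ai generated", "midjourney", "stable diffusion", "dall-e")


@dataclass
class ImageResult:
    """Hypothetical search result: a URL plus whatever the host page reports."""
    url: str
    description: str = ""
    metadata: dict = field(default_factory=dict)


def is_self_reported_ai(result: ImageResult) -> bool:
    """True if the result labels itself as AI-made, via metadata or description."""
    # Structured tag (assumed key name): easy to apply, easy to strip or spoof.
    if result.metadata.get("ai_generated") is True:
        return True
    # Fallback: look for generator names in the free-text description.
    text = result.description.lower()
    return any(keyword in text for keyword in AI_KEYWORDS)


def filter_results(results: list[ImageResult]) -> list[ImageResult]:
    """Keep only results that do not self-report as AI-generated."""
    return [r for r in results if not is_self_reported_ai(r)]


if __name__ == "__main__":
    results = [
        ImageResult("https://example.com/a.jpg", "Portrait made with Midjourney v6"),
        ImageResult("https://example.com/b.jpg", "Museum scan of an oil painting"),
        ImageResult("https://example.com/c.jpg", "Untitled", {"ai_generated": True}),
    ]
    for kept in filter_results(results):
        print(kept.url)
```

The keyword match also shows the weakness: an article *about* Midjourney that uses a real photo would get filtered too, which is part of why a uniform tag would be nicer than guessing from descriptions.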

Reality:

The internet is really going to need some kind of centralized hash signature authority.

What the hell is going on with that mustache?

Something posted on the internet is available by searching on google! The world is ending!