What is amazing in this case is that they achieved this while spending a fraction of the inference cost that OpenAI is paying.

Plus, they are a lot cheaper. But I am pretty sure that the American government will ban them in no time, citing national security concerns, etc.

Nevertheless, I think we need more open source models.

Not to mention that NVIDIA also needs to be brought to earth.

This sounds like good engineering, but surely there’s not a big gap with their competitors. They are spending tens of millions on hardware and energy, and this is something a handful of (very good) programmers should be able to pull off.

Unless I’m missing something, it’s the sort of thing that’s done all the time on console games.

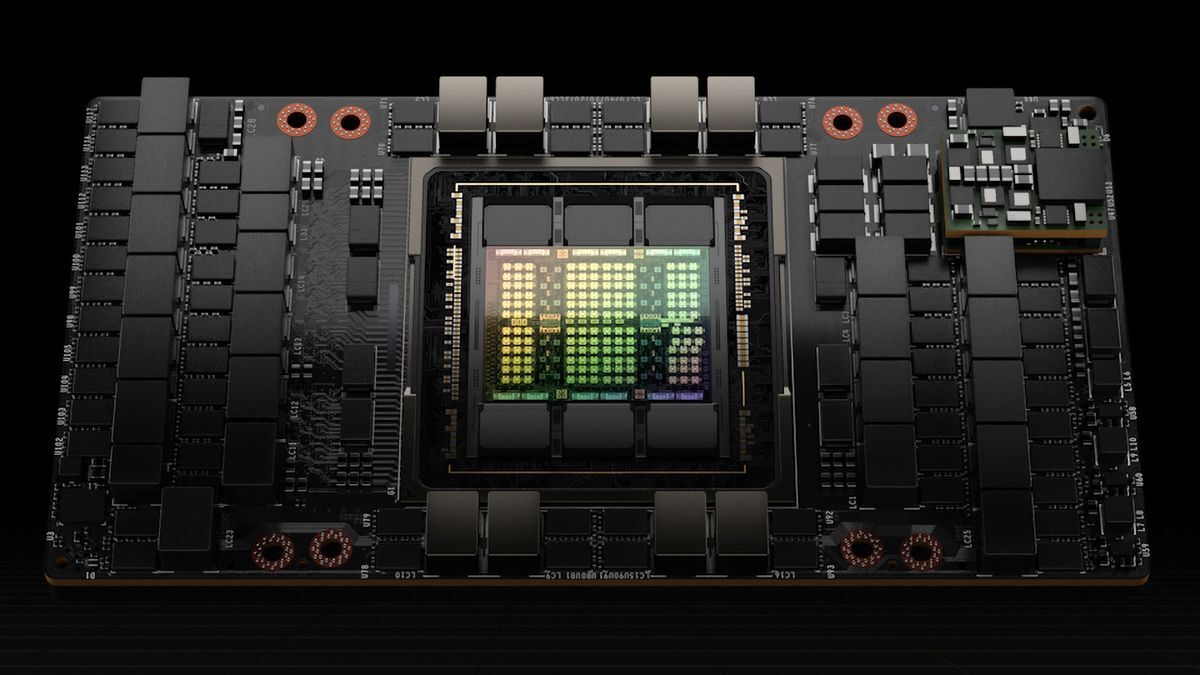

Part of this was an optimization that was necessary due to their resource restrictions. Chinese firms can only purchase H800 GPUs instead of H200 or H100. These have much slower inter-GPU communication (less than half the bandwidth!) as a result of export bans by the US government, so this optimization was done to try and alleviate some of that bottleneck. It’s unclear to me if this type of optimization would make as big of a difference for a lab using H100s/H200s; my guess is that it probably matters less.


This is why Nvidia stock has been hit so hard. CUDA is their moat.